Abstract: Despite remarkable progress in large language models, Urdu—a language spoken by over 230 million people—remains critically underrepresented in modern NLP systems. We introduce Qalb, an Urdu language model developed through a two-stage approach: continued pre-training followed by supervised fine-tuning. Starting from LLaMA 3.1 8B, we perform continued pre-training on a dataset of 1.97 billion tokens, achieving state-of-the-art performance with a weighted average score of 90.34, outperforming the previous best model by 3.24 points and demonstrating a 44.64-point improvement over the base model.

Why QALB Matters

We are thrilled to announce QALB, the largest state-of-the-art Urdu Large Language Model, setting a new benchmark in multilingual AI. Specifically optimized for Urdu, QALB addresses critical challenges in Urdu NLP and brings significant advancements in reasoning, fluency, and cultural alignment. This launch marks a significant milestone in making AI more accessible and accurate for 230 million Urdu speakers worldwide.

Developing a high-performing Urdu LLM presents several hurdles:

Critical Challenges in Urdu NLP

- Most multilingual LLMs struggle with Urdu, often producing inconsistent or extremely hallucinated responses. They also sometimes insert foreign characters during Urdu text generation.

- Lack of High-Quality Datasets: Urdu lacks a reliable, instruction-tuned dataset for effective training. Existing datasets are often small, noisy, or lack diversity.

- Translation Limitations: Direct translation is not enough, often resulting in fluency loss and cultural misalignment, highlighting the need for native Urdu data generation.

- Reasoning & Script Challenges: Urdu's right-to-left Nastaliq script conflicts with left-to-right reasoning tasks, while existing safety frameworks fail to align with regional requirements.

- Culturally-Aware AI is Crucial: There's a critical need for AI models that understand and respect the nuances of low-resource languages, including cultural context and literary traditions.

- Insufficient Pre-training Data: Even the base LLaMA-3.1 8B-Instruct model shows unsatisfactory performance on Urdu tasks, generating text that native speakers find unnatural due to insufficient exposure to quality Urdu data during pre-training.

How Our Approach Solves These Challenges

To overcome these challenges, we have designed QALB, a powerful Urdu-English model using systematic continued pre-training:

Our Systematic Two-Stage Approach

- Large-Scale Continued Pre-training: We perform continued pre-training on 1.97 billion tokens, comprising 1.84 billion tokens of diverse Urdu content from multiple domains (news archives, classical and contemporary literature, government documents, and social media) combined with 140 million tokens of English Wikipedia to prevent catastrophic forgetting.

- Enhanced Urdu Reasoning Capabilities: We integrate Urdu-native instruction fine-tuning on the Alif Urdu-instruct dataset, ensuring better contextual comprehension and making sentiment analysis, classification, and reasoning more precise.

- Optimized Training Pipeline for Efficiency: Our efficient and cost-effective training approach includes:

- Continued Pretraining: We leverage diverse Urdu sources to strengthen foundational knowledge of Urdu language.

- Fine-Tuning: The Alif Urdu-instruct dataset is used for supervised fine-tuning, ensuring the model functions as a helpful Urdu-speaking assistant.

- State-of-the-Art Performance on a Budget: By employing systematic continued pre-training, we have enhanced Meta Llama 3.1 8B's Urdu capabilities significantly. QALB now outperforms Meta Llama 3.1 8B Instruct in Urdu-specific tasks while maintaining strong English fluency. It also outperforms the previous state-of-the-art Alif-1.0-Instruct model by 3.24 points.

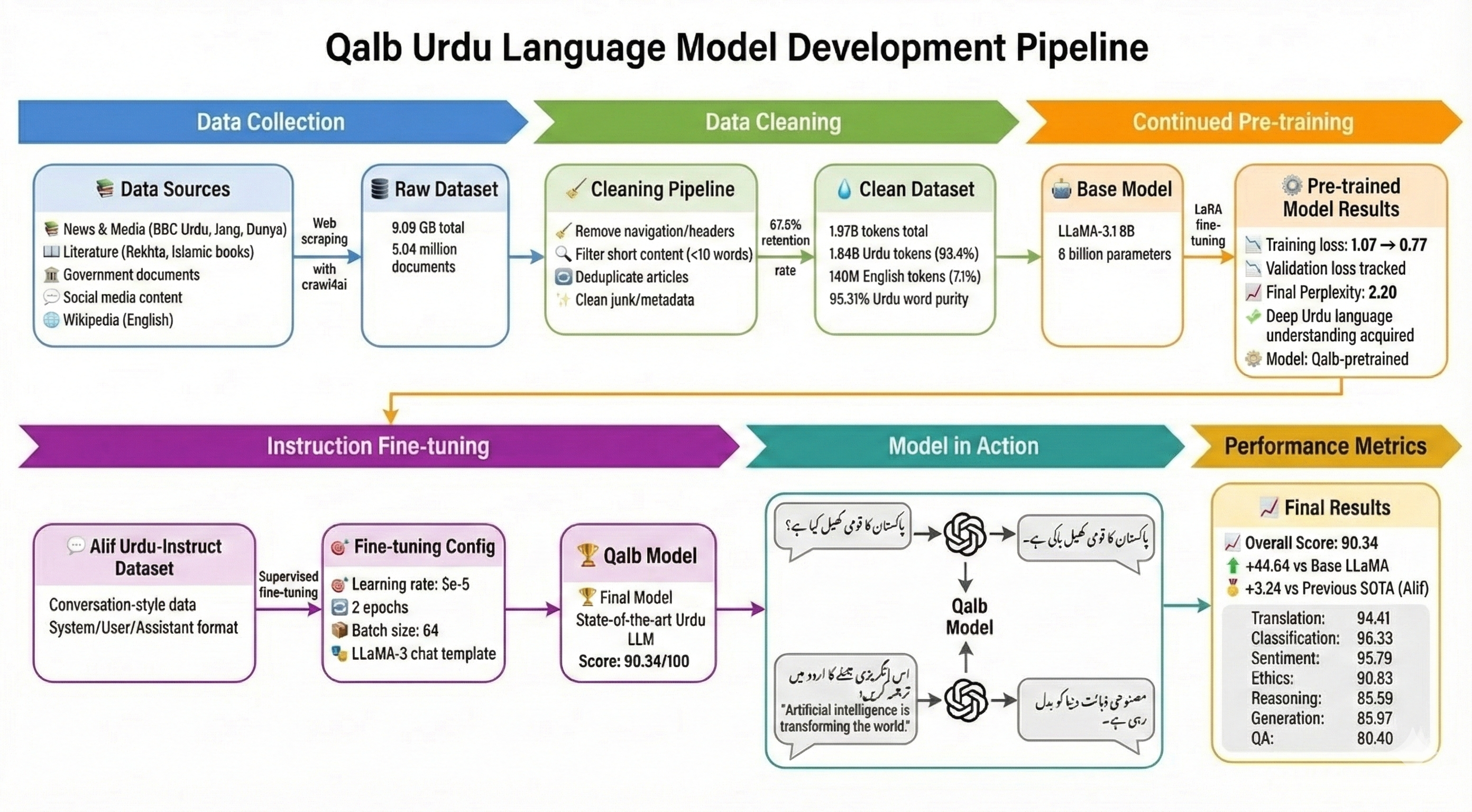

QALB Pipeline

Our systematic approach consists of three key stages: data curation, continued pre-training, and instruction fine-tuning. The following diagram illustrates our complete pipeline:

Dataset Construction

To ensure the efficient acquisition of high-quality, structured text data from web sources, we utilized the crawl4ai library—an open-source, asynchronous web scraping framework optimized for Large Language Model workflows. Unlike traditional static scrapers, crawl4ai leverages a headless browser architecture (via Playwright) to accurately render and capture dynamic content, including JavaScript-heavy pages.

Using this framework, we curated cleanest urdu dataset.jsonl, a massive corpus totaling 9.09 GB of text data. The final cleaned dataset contains approximately 1.97 billion tokens across 5.04 million documents.

Urdu Corpus (1.84 Billion Tokens)

The vast majority of our dataset consists of high-quality Urdu text:

- News & Media: Over 61 million words from major publications including BBC Urdu, Jang, Dunya News, and UrduPoint.

- Literature & Religion: Extensive volumes from Islamic Urdu Books, literary archives like Rekhta, and the Makhzan corpus.

- Specialized Domains: Sub-corpora for Sports, Entertainment, and Health.

- Government Documents: Official documents and publications.

- Social Media: Curated social media content to capture contemporary language usage.

English Corpus (140 Million Tokens)

We specifically integrated 140 million tokens of high-quality English text sourced from Wikipedia. This addition serves as a replay buffer to maintain the model's general reasoning capabilities and prevent the degradation of its original English performance—addressing the challenge of catastrophic forgetting.

Data Cleaning Pipeline

We implemented a rigorous multi-stage cleaning pipeline to ensure data quality:

- Navigation Removal: Stripping hundreds of identified footer and header patterns.

- Filtering: Removing records with fewer than 10 words or less than 50 characters to eliminate noise.

- Deduplication: Applying hash-based detection to remove duplicate articles across different news aggregators.

- Junk Removal: Cleaning numeric artifacts, timestamps, and non-Urdu metadata.

The final filtration process resulted in a retention rate of approximately 67.8%, ensuring only high-quality semantic content remained for training.

Training Methodology

Continued Pre-Training

Unlike training from scratch, which requires massive computational resources, continued pre-training leverages the general linguistic knowledge already encoded in a pre-trained model and extends it with target language data. This approach is particularly effective for low-resource languages like Urdu.

We perform continued pre-training on the unsloth/MetaLlama-3.1-8B base model using Low-Rank Adaptation (LoRA). Rather than updating all 8 billion parameters, LoRA introduces trainable low-rank decomposition matrices into the model's layers, significantly reducing memory requirements and computational costs while maintaining model quality.

Training Infrastructure

- Hardware: Single NVIDIA A100 80GB GPU

- Framework: Unsloth library—an optimized training framework combining memory-efficient attention mechanisms with fast LoRA implementations

- Precision: bfloat16 with gradient checkpointing

- Optimizer: AdamW-8bit

- Learning Rate: Cosine schedule with warmup ratio of 0.05

- LoRA Rank (r): 128

- LoRA Alpha: 32

- Trainable Parameters: ~1.18B (~14.72% of base)

- Effective Batch Size: 128

- Sequence Length: 2048

Instruction Fine-Tuning

After continued pre-training, we perform supervised fine-tuning on the Alif Urdu-instruct dataset:

- Configuration: Same LoRA setup (rank 128), learning rate 5e-5, 2 epochs, batch size 64

- Optimizer: AdamW-8bit with linear scheduling

- Prompt Format: Official Llama-3 chat template with distinct control tokens

- System Prompt: Explicitly instructs the model to function as a helpful Urdu-speaking assistant

Experimental Results

We evaluate QALB on a comprehensive Urdu evaluation suite covering seven diverse tasks:

- Generation: Creative and factual text generation

- Ethics: Moral reasoning and ethical judgment

- Question Answering: Factual knowledge retrieval

- Reasoning: Logical and commonsense reasoning

- Translation: Urdu-English bidirectional translation

- Classification: Text categorization tasks

- Sentiment Analysis: Emotion and opinion detection

Performance Comparison

QALB establishes a new State-of-the-Art for Urdu Language Modeling, achieving an overall weighted average score of 90.34, significantly outperforming the previous best model, Alif-1.0-Instruct (87.1), by 3.24 points.

| Model | Generation | Translation | Ethics | Reasoning | Classification | Sentiment | QA | Avg. Score |

|---|---|---|---|---|---|---|---|---|

| LLaMA-3.1-8B-Inst. | 42.8 | 58.9 | 27.3 | 45.6 | 61.4 | 54.3 | 30.5 | 45.7 |

| Alif-1.0-8B-Inst. | 90.2 | 89.3 | 85.7 | 83.5 | 93.9 | 94.3 | 73.8 | 87.1 |

| QALB (Ours) | 85.97 | 94.41 | 90.83 | 88.59 | 96.38 | 95.79 | 80.40 | 90.34 |

Key Improvements

- Massive improvement over base model: QALB achieves a 44.64-point improvement over the base LLaMA-3.1 8B-Instruct model (45.7 vs 90.34), demonstrating the critical importance of continued pre-training on Urdu data.

- Outperforms Alif on 6 out of 7 tasks: The largest gains are in QA (+6.6 points), Translation (+5.11 points), and Reasoning (+5.09 points). QALB also achieves substantial improvements in Classification (+2.48 points) and Sentiment Analysis (+1.49 points).

- State-of-the-art performance: QALB establishes new benchmarks across multiple Urdu NLP tasks, proving the effectiveness of our continued pre-training methodology.

Impact and Significance

QALB represents a significant milestone in Urdu natural language processing, addressing the critical gap in language model support for one of the world's most widely spoken languages. With 230 million speakers across Pakistan and global diaspora communities, Urdu has long been underserved by modern NLP systems.

Our work demonstrates that continued pre-training on substantial, diverse language data is essential for building effective language models for low-resource languages. The methodology we present is reproducible and can guide similar efforts for other underserved languages, making AI more accessible globally.

By achieving state-of-the-art performance across multiple Urdu benchmarks, QALB enables new possibilities for:

- Natural language interfaces in Urdu

- Educational technology for Urdu-speaking communities

- Preservation and digitization of Urdu literature

- Cross-lingual applications and translation

- Cultural and linguistic research

What's Next?

- Gather more diverse Urdu data to enhance the model's knowledge and understanding.

- Apply Model Merging and other RL techniques to improve bilingual and reasoning capabilities.

- Conduct further evaluations and benchmarking on additional Urdu-specific tasks.

- Release model weights and datasets to the open-source community.

QALB is a monumental step forward for Urdu NLP, ensuring cultural and linguistic alignment while expanding bilingual AI capabilities. Stay tuned for more updates as we continue to push the boundaries of AI innovation!

Conclusion

We present QALB, the largest state-of-the-art Urdu Large Language Model, developed through systematic continued pre-training on 1.97 billion tokens followed by instruction fine-tuning. QALB achieves a weighted average score of 90.34, outperforming the previous state-of-the-art by 3.24 points and demonstrating a 44.64-point improvement over the base model.

Our results validate that continued pre-training is essential for building capable language models for low-resource languages. The comprehensive evaluation across seven diverse tasks demonstrates QALB's superior performance in understanding, generating, and reasoning about Urdu text.

This work contributes to making AI more accessible to Urdu speakers worldwide and provides a replicable framework for adapting foundation models to other low-resource languages. We hope that QALB will serve as a foundation for future research and applications in Urdu NLP.

Citation

If you use QALB in your research, please cite:

@article{qalb2025,

title={Qalb: Largest State-of-the-Art Urdu Large Language Model for 230M Speakers with Systematic Continued Pre-training},

author={Hassan, Muhammad Taimoor and Ahmed, Jawad and Awais, Muhammad},

journal={arXiv preprint arXiv:2601.08141},

year={2026},

eprint={2601.08141},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/2601.08141},

doi={10.48550/arXiv.2601.08141}

}

arXiv: 2601.08141 [cs.CL]

DOI: 10.48550/arXiv.2601.08141

Submitted: January 13, 2026

Acknowledgments

We thank the open-source community for the tools and frameworks that made this work possible, including the Unsloth library, Meta's LLaMA models, and the Alif team for their Urdu instruction dataset. Special thanks to the Urdu-speaking community for their continued support and feedback.

For questions, collaborations, or access to the model, please contact the authors or visit the arXiv paper.